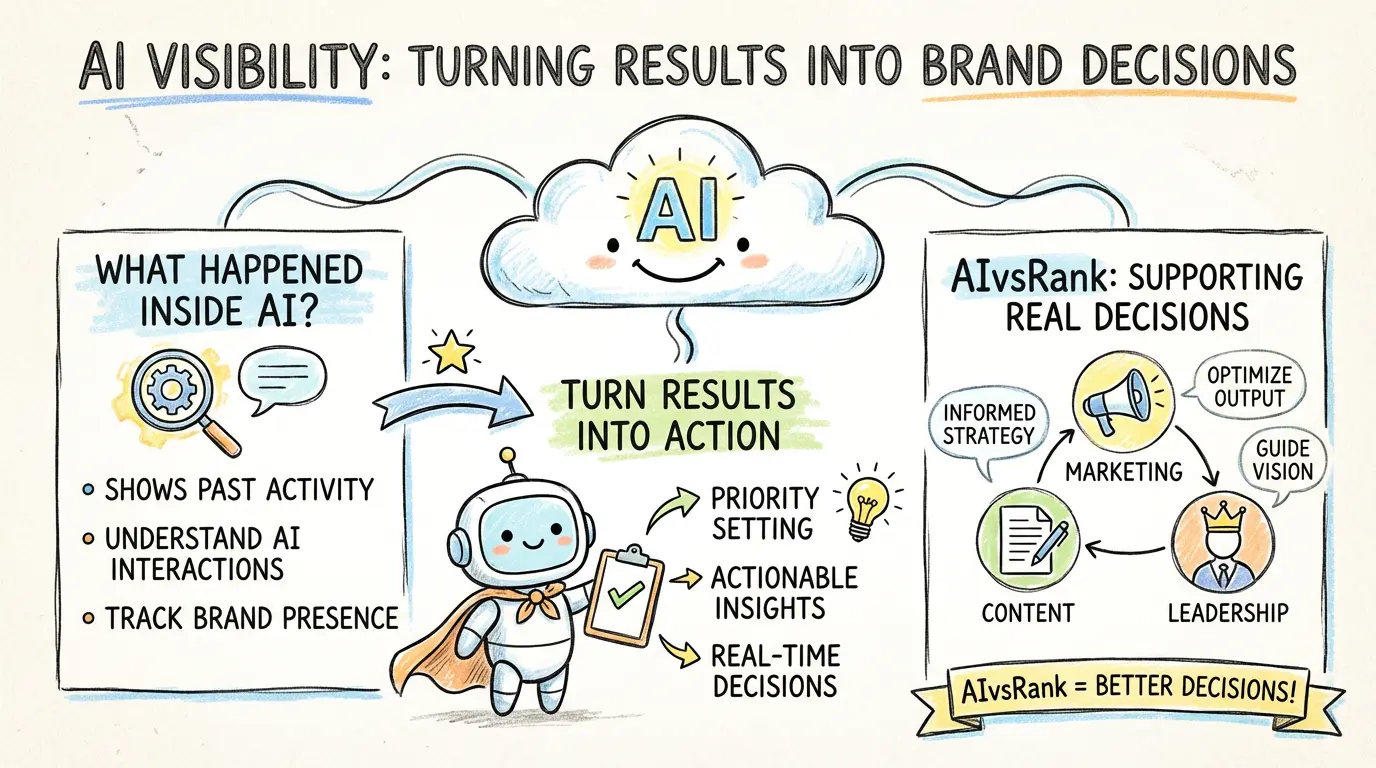

AI visibility helps brands make decisions because it turns AI outcomes into clearer priorities, not just more monitoring data. The real value is not simply seeing what happened to the brand in AI. It is using those results to decide what should be fixed first.

How AI Visibility Software Supports Brand Visibility Decisions

AI visibility software is useful when it connects four signals: whether a brand is mentioned, how often it appears beside competitors, which answer engines surface it, and what content gaps keep it out of AI-generated answers. For teams comparing brand visibility tools, AIvsRank turns those signals into a workflow: inspect the public AI leaderboards, review recurring signals in AI visibility tracking, and decide whether the next fix belongs in content, positioning, or pricing. If the gap is content structure, start by understanding why traditional blog formats underperform in AI-first search before rewriting more pages.

If the first article answered why AI visibility matters now, and the second explained what AIvsRank AI visibility measures, this one addresses the business question that follows: once clients receive those results, how do they actually use them?

What companies need is not just another set of AI mention data. They need to diagnose problems faster, set priorities faster, and stop revising content without knowing what actually needs fixing.

That is why the bigger value of AI visibility is not simply seeing the result. It is turning the result into business action.

Which Teams Use These Results

AI visibility results are not for one person alone. Different roles inside a company use the same results to make different decisions.

- marketing teams use them to judge whether the brand is being expressed correctly in AI and whether external brand language should be corrected first

- content teams use them to decide which problem scenarios deserve priority and which pages or expressions are most likely to cause AI misunderstanding

- leadership teams use them to judge competitive pressure at the AI entry point and decide whether resources should go first to brand language, content reinforcement, or competitive-context correction

That is why the value of AIvsRank AI visibility is not just providing a report. It turns the result into decision input that different teams can actually use.

What Clients Really Use the Results to Decide

The judgments clients make do not come from one isolated answer. They come from repeated outcomes across multiple problem scenarios, combined with signals of brand misunderstanding and competitive context. In other words, AIvsRank focuses on which issues keep recurring across scenarios rather than on what happened by chance in a single response.

For clients, the most important thing is not seeing more information. It is answering a few key questions more quickly:

- which high-value problem scenarios the brand is absent from

- whether misunderstanding is mainly caused by how the brand's capabilities are described or by category confusion

- which competitors create real pressure inside AI comparison contexts

- whether the current priority should be brand language, content structure, or competitive framing

Once those questions are answered, AI visibility stops being a monitoring output and starts becoming decision input.

How Clients Move From Results to Action

For clients, the real issue is not simply seeing the problem. It is whether the problem can be turned into action quickly. A business-realistic path usually looks like this:

- identify which problem scenarios the brand is absent from or weakly positioned in

- judge whether the issue looks more like a brand-language problem, a content-structure problem, or a competitive-context problem

- set priority based on impact and fixability

- direct limited resources first toward the areas most likely to change AI-generated answers

Priority here is usually not set by counting how many problems exist. It is judged by three factors together: whether the issue keeps recurring, whether it affects high-value problem scenarios, and whether there is a relatively clear path to fix it.
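As a rough illustration, the three factors above could be combined into a single ranking. The sketch below is hypothetical: the `Issue` fields, the 0-to-1 scales, and the multiplicative score are assumptions made for this example, not a description of how AIvsRank scores anything.

```python
from dataclasses import dataclass


@dataclass
class Issue:
    name: str
    recurrence: float      # assumed 0..1: how consistently the issue shows up across scenarios
    scenario_value: float  # assumed 0..1: how valuable the affected problem scenarios are
    fixability: float      # assumed 0..1: how clear the path to a fix is


def priority_score(issue: Issue) -> float:
    # Multiplying means an issue must do reasonably well on all three
    # factors together; a one-off or low-value issue drops down the list.
    return issue.recurrence * issue.scenario_value * issue.fixability


def rank_issues(issues: list[Issue]) -> list[Issue]:
    # Highest score first.
    return sorted(issues, key=priority_score, reverse=True)


# Made-up example values, for illustration only.
issues = [
    Issue("absent from comparison questions", 0.8, 0.9, 0.5),
    Issue("placed in the wrong category", 0.6, 0.7, 0.9),
    Issue("one-off wrong feature claim", 0.1, 0.4, 0.9),
]

ranked = rank_issues(issues)
for issue in ranked:
    print(f"{issue.name}: {priority_score(issue):.3f}")
```

A multiplicative score is only one possible choice. It is used here because it reflects the idea that the three factors are judged together rather than counted separately.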

The value of AIvsRank AI visibility is helping clients shorten that path instead of leaving the team at the stage of simply knowing the problem exists.

A Minimum Scenario: How a Result Turns Into Priority

Consider a B2B software brand that finds it is not fully absent from AI answers, but is repeatedly compared with broader, more general platforms. As a result, its core advantage is diluted in the comparison context.

For the marketing team, that means the biggest risk is not that nobody mentions the brand, but that the brand difference is not being explained clearly when AI does mention it. For the content team, it means they should strengthen the problem scenarios most likely to enter comparison contexts instead of spreading effort evenly across every page. For leadership, this kind of result directly affects resource allocation: should the company keep expanding content volume, or should it first fix brand language and differentiated positioning?

That also means the team's next move should not be to keep producing more generic content. It should first fix how the brand difference is expressed inside those comparison scenarios.

The same data leads to different actions for different teams, but all of them point toward clearer priorities rather than more vague tasks.

These Results Are Not a One-Time Snapshot

For many clients, AI visibility is not something they look at once and then forget. More often, it becomes a way to track how the brand's position changes in AI over time.

For example, once a team has unified brand language, strengthened high-value problem scenarios, or adjusted competitor assumptions, the next question is whether absence, misunderstanding, and comparison pressure inside AI have actually changed.

That means AI visibility is not just a one-time diagnosis. It can become an ongoing business signal. It helps teams judge:

- which problems have already been corrected

- which problems still keep recurring

- which competitive pressures are rising or falling

- which changes actually improved AI-generated answers

That continuity makes AI visibility closer to a long-term observation capability than to a one-time snapshot.
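One simple way to picture this kind of tracking is to compare the set of recurring issues from two review periods. The sketch below assumes each period is summarized as a set of issue labels; the function name and the labels are hypothetical and chosen only for illustration.

```python
def compare_snapshots(before: set[str], after: set[str]) -> dict[str, list[str]]:
    """Compare the recurring issues seen in two review periods."""
    return {
        # Seen earlier, no longer seen: likely corrected.
        "corrected": sorted(before - after),
        # Seen in both periods: still recurring.
        "still_recurring": sorted(before & after),
        # Not seen earlier, seen now: new pressure or a new misunderstanding.
        "newly_appeared": sorted(after - before),
    }


# Made-up labels, for illustration only.
earlier = {"absent: pricing questions", "miscategorized as general platform"}
later = {"miscategorized as general platform", "compared with new competitor"}

changes = compare_snapshots(earlier, later)
for status, labels in changes.items():
    print(status, labels)
```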

A More Business-Realistic Decision Path

Suppose a brand's main issue in AI answers is not complete absence, but repeated placement in the wrong comparison context. In that case, the first priority is usually not to produce more content. It is to unify brand language, clarify capability boundaries, and then reinforce the problem scenarios most likely to trigger comparison.

If another brand's main issue is absence from high-value problem scenarios, the priority changes. That team needs to strengthen the problem spaces that directly affect entry into the consideration set instead of trying to revise every page equally.

Some brands do appear regularly, but their problem is understanding quality rather than appearance rate. AI mentions them, but describes them too vaguely or places them into categories that are too broad. For those clients, the more effective priority is often to fix brand language and capability boundaries before creating more general content.
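The three cases above can be summarized as a small lookup from the dominant issue to the first actions to take. This is only a sketch: the issue keys and the function name are hypothetical labels invented here, while the actions are taken from the paragraphs above.

```python
# Hypothetical issue labels mapped to the first priorities described above.
FIRST_PRIORITY = {
    "wrong_comparison_context": [
        "unify brand language",
        "clarify capability boundaries",
        "reinforce the scenarios most likely to trigger comparison",
    ],
    "absent_from_high_value_scenarios": [
        "strengthen the problem spaces that affect entry into the consideration set",
    ],
    "vague_or_too_broad_description": [
        "fix brand language",
        "clarify capability boundaries",
    ],
}


def first_priority(dominant_issue: str) -> list[str]:
    # Raises KeyError for an unknown label instead of guessing an action.
    return FIRST_PRIORITY[dominant_issue]


print(first_priority("wrong_comparison_context"))
```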

That difference is exactly where AI visibility starts to matter at the decision layer. It helps teams decide:

- what to fix first

- what can wait

- where resources should go first

- which actions are most likely to change AI-generated answers

In that sense, AIvsRank AI visibility does not create more scattered actions. It creates a clearer order of action. That is the core of its value: it moves the view of brand performance in AI from "what happened" to "what should be done first," so the result can enter real decision workflows across marketing, content, and leadership.