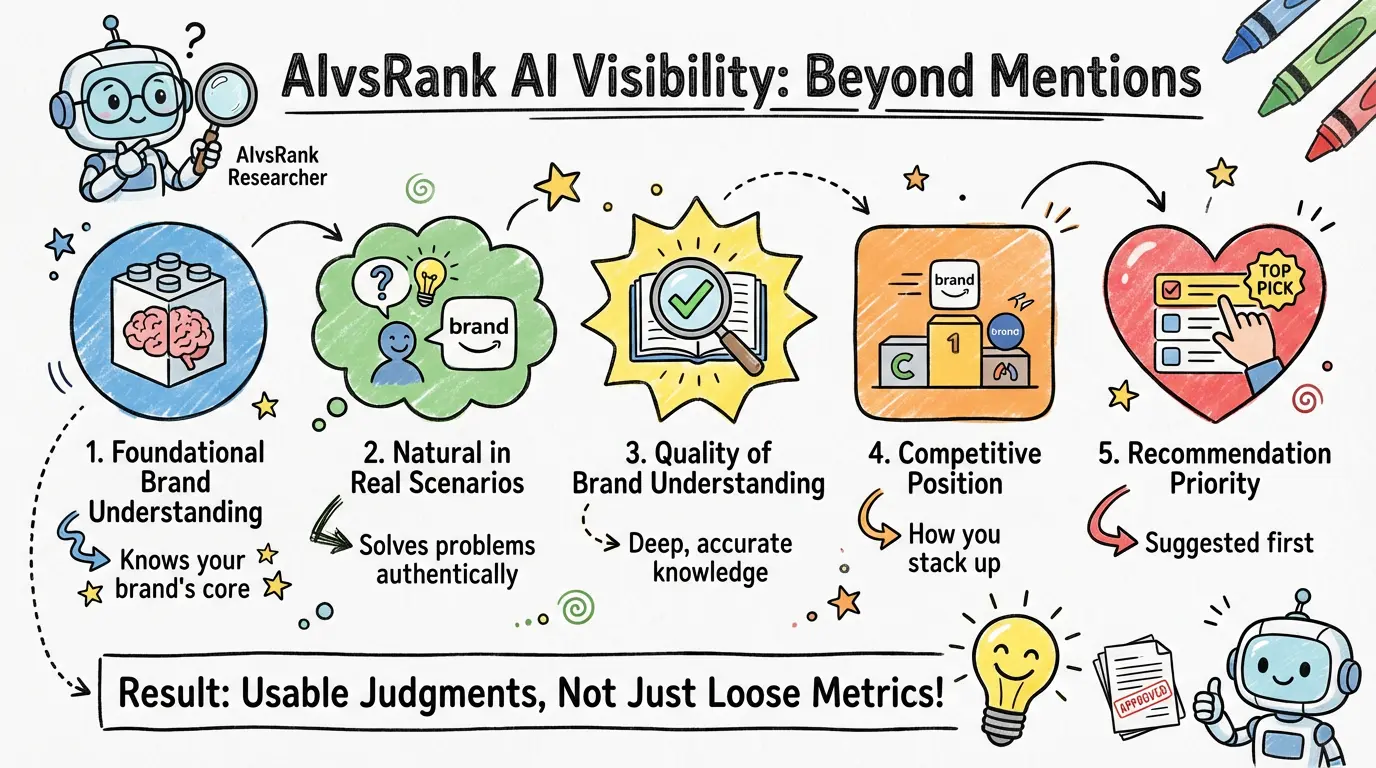

AIvsRank AI visibility measures more than whether a brand is mentioned by AI. It evaluates brand performance across five layers: foundational brand understanding, natural appearance in real problem scenarios, quality of AI understanding, competitive position, and recommendation priority.

That distinction matters because many products talk about AI visibility while still showing clients only surface-level mention results. AIvsRank is designed to answer a broader question: how a brand is seen in AI, how it is understood, which alternatives it is grouped with, and how those outcomes can be turned into decisions a team can actually use.

These five layers are the core observation logic of AIvsRank AI visibility and a practical framework for helping clients understand a brand's real position inside AI.

They are not an industry-wide standard. They are a working framework AIvsRank uses when continuously observing brand performance in AI. The framework combines three lines of judgment: whether a brand's foundational expression is stable, whether the brand naturally enters real problem scenarios, and whether the brand is placed in the right competitive context when it is mentioned. Publicly, we compress that logic into five layers so clients can understand the results more easily and act on them more quickly.

Layer 1: Foundational Brand Understanding

At this layer, AIvsRank looks at whether the brand's foundational information is complete, clear, and stable enough for AI systems to absorb consistently. The focus is not on how much material exists but on whether the brand's category, core problem, target user, and capability boundaries are clearly expressed.

Specifically, we check whether those four elements stay stable across the brand's different outward-facing expressions, so that AI systems can form a consistent understanding.

What the client gets is not just an abstract judgment. They receive a foundational brand-understanding profile that can serve as the baseline for later analysis. It helps the team confirm which parts of the brand's external expression are already stable and which parts are still likely to create AI interpretation errors.

The value of this layer is that it gives every later AI visibility judgment a shared reference point, reducing misreads caused by inconsistent self-description.

Layer 2: Natural Appearance in Real Problem Scenarios

At this layer, AIvsRank is not focused on the brand keyword itself. It looks at whether the brand enters AI answers naturally in real demand-driven questions, and if it does, where it tends to appear.

At this layer, we look at the brand's appearance rate and relative position across multiple real problem scenarios instead of checking only whether it appears in one isolated question.
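As a rough illustration of what "appearance rate and relative position across multiple scenarios" could mean in practice, the sketch below computes both per scenario. It is not AIvsRank's implementation: the function name `appearance_stats`, the data shape (each AI answer reduced to an ordered list of the brands it names), and all brand and scenario names are assumptions made up for this example.

```python
from statistics import mean


def appearance_stats(answers_by_scenario, brand):
    """Return appearance rate and mean 1-based position of `brand` per scenario."""
    stats = {}
    for scenario, answers in answers_by_scenario.items():
        # Position is the brand's 1-based rank in the answer's ordered brand list.
        positions = [a.index(brand) + 1 for a in answers if brand in a]
        stats[scenario] = {
            "appearance_rate": len(positions) / len(answers) if answers else 0.0,
            "mean_position": mean(positions) if positions else None,
        }
    return stats


# Hypothetical sample: each inner list is the ordered brands one AI answer named.
sample = {
    "automate invoice approvals": [
        ["OtherCo", "ExampleBrand"],
        ["ExampleBrand", "OtherCo"],
        ["OtherCo"],
    ],
    "build an internal AI workflow": [
        ["OtherCo", "ThirdCo"],
        ["ThirdCo"],
    ],
}

for scenario, result in appearance_stats(sample, "ExampleBrand").items():
    print(scenario, result)
```

In this made-up sample the brand appears in two of three answers for the first scenario and never in the second, which is the kind of per-scenario contrast a single isolated question would hide.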

What the client sees is the brand's natural appearance across different problem scenarios, including where it appears consistently, where it is completely absent, and where it appears but without an advantageous position.

This helps the team answer an important question: is the brand simply explained clearly on its own website, or is it actually likely to surface first in real AI question-and-answer contexts?

Layer 3: Quality of Brand Understanding

At this layer, AIvsRank looks at whether AI actually understands the brand when it mentions it. That includes whether the core capability is captured correctly, whether the description is clear, whether the product tier is accurate, and whether the understanding is stable.

At this layer, we look at whether AI captures the brand's core capability, whether the explanation becomes too generic, and whether the brand is placed into the wrong category or tier.

What the client sees is a judgment about brand-understanding quality. The team can use it to determine whether the brand is visible but misunderstood, whether it is being described too vaguely, or whether it is simply being described wrongly.

The value of this layer is that it moves the conversation from whether the brand appears to whether it appears correctly, helping clients identify AI performance that looks like exposure on the surface but is ineffective or even risky underneath.

Layer 4: Competitive Position

At this layer, AIvsRank looks not only at the brand itself but at the brand's relative position in the competitive environment. That includes which competitors co-occur most easily, which alternatives create more direct pressure, and whether the brand is in a weak position inside comparison contexts. This layer does not rely only on AI answers. It also uses external search-environment signals, such as SERP context, to help explain why certain comparison relationships keep recurring.

At this layer, we look at which competitors co-occur most frequently with the brand, which comparison contexts repeat, and whether those comparison relationships are reinforced both by AI answers and by external search signals.
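One simple way to picture the co-occurrence idea is a frequency count over the answers that mention the brand, followed by a check against competitors seen in search results. The sketch below assumes exactly that; the function `co_occurring_competitors`, the input shapes, and every company name are hypothetical, and a plain count is only a stand-in for whatever signals AIvsRank actually combines.

```python
from collections import Counter


def co_occurring_competitors(answers, brand):
    """Count how often each other brand appears in the same answer as `brand`."""
    counts = Counter()
    for brands in answers:
        if brand in brands:
            # Use a set so a competitor named twice in one answer counts once.
            counts.update(set(brands) - {brand})
    return counts


# Hypothetical inputs: brands named per AI answer, and competitors that also
# show up alongside the brand in search results (SERP context).
ai_answers = [
    ["ExampleBrand", "OtherCo", "ThirdCo"],
    ["OtherCo", "ExampleBrand"],
    ["ThirdCo", "FourthCo"],
]
serp_competitors = {"OtherCo", "FourthCo"}

counts = co_occurring_competitors(ai_answers, "ExampleBrand")
# Competitors present in both AI answers and the search context.
reinforced = sorted(name for name in counts if name in serp_competitors)

print(counts.most_common())
print(reinforced)
```

Here `FourthCo` appears in the search context but never alongside the brand in an AI answer, so it is not treated as a reinforced comparison relationship.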

What the client sees is a set of competitive judgments that are closer to market reality than a single visibility metric. The team can more quickly identify which companies are core competitors, which are direct competitors, which are the real candidates displacing the brand inside AI environments, and which comparison contexts currently make the brand most vulnerable.

The value of this layer is that it moves the work from brand monitoring to market judgment, helping clients connect AI visibility to competitive strategy. AIvsRank also lets users add competitors they believe matter, so the analysis reflects the real business environment instead of relying only on automatic system detection.

Layer 5: From Judgment to Recommendations

At this layer, AIvsRank turns the earlier observations into recommendation judgments that are closer to execution priority. The goal is not generic advice. It is to help the client decide what should be fixed first.

At this layer, we combine the recurring problem patterns, stability gaps, and sources of competitive pressure surfaced in the earlier layers to judge which type of fix deserves priority.

What the client sees is not only what happened, but also a clearer direction for action. For example, they can judge whether the highest priority is brand language, content structure, product-tier description, or differentiation inside a competitive context.
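To make the idea of turning findings into a priority concrete, the sketch below tags each finding from the earlier layers with the type of fix it points to and ranks fix types by how often they recur. This is only an illustrative heuristic under stated assumptions: the `findings` records, their field names, and the rule "most recurring first" are invented for the example and are not a description of how AIvsRank weighs its judgments.

```python
from collections import Counter


def rank_fix_types(findings):
    """Rank fix types by how many findings point to them, most frequent first."""
    tally = Counter(f["fix_type"] for f in findings)
    return tally.most_common()


# Hypothetical findings surfaced by layers 1 to 4.
findings = [
    {"layer": 1, "issue": "category wording varies by page", "fix_type": "brand language"},
    {"layer": 2, "issue": "absent from pricing questions", "fix_type": "product-tier description"},
    {"layer": 3, "issue": "placed in the wrong category", "fix_type": "brand language"},
    {"layer": 3, "issue": "tier described inaccurately", "fix_type": "product-tier description"},
    {"layer": 4, "issue": "grouped with lower-tier tools", "fix_type": "brand language"},
    {"layer": 4, "issue": "no clear contrast with rival", "fix_type": "competitive differentiation"},
]

for fix_type, count in rank_fix_types(findings):
    print(fix_type, count)
```

A real prioritization would likely weigh severity and business impact as well as frequency; the count is used here only because it is the simplest signal to show.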

The value of this layer is that it shortens the distance between finding the problem and setting the priority, allowing AI visibility to move beyond monitoring and into execution.

A Minimal Scenario: The Brand Appears, but Enters the Wrong Comparison Set

Consider a SaaS brand that consistently presents itself on its website as an AI workflow platform, but is repeatedly categorized in AI answers as an automation tool. As a result, the brand is mentioned in many problem scenarios, but it is consistently placed next to options that are not truly in the same tier.

In AIvsRank AI visibility, the client would not see only that the brand was mentioned. They would also see:

- which problem scenarios consistently include the brand

- whether AI has understood the brand as the wrong category

- which unfavorable comparison contexts the brand is entering as a result

- which competitors create real pressure in those contexts

That is why AI visibility cannot be reduced to mention counts alone. Brand understanding and competitive position have to be assessed together.

What Results the Client Ultimately Sees

For clients, AIvsRank AI visibility does not ultimately present itself as a loose set of numbers. It produces several result forms that can be used directly:

- which problem scenarios consistently include the brand and which scenarios leave it absent over time

- which scenarios cause the brand to be misunderstood, over-generalized, or placed into the wrong category

- which companies should be treated as core competitors and which should be treated as direct competitors

- which comparison contexts are jointly shaped by AI answers and external search-environment signals

- which problem direction deserves priority right now

That means the client receives not simply more data, but a set of outputs that is closer to actual business use.

What the Client Ultimately Gets Is Not a Loose Set of Metrics

From the client's perspective, the value of AIvsRank AI visibility is not that it breaks brand performance into more isolated metrics. It is that it organizes those results into a more usable judgment: whether the brand appears, whether it is understood correctly, whether it sits in a favorable competitive position, and what the team should prioritize next.

That is also the more important difference between AIvsRank and a simple AI mention-monitoring tool. AIvsRank helps clients identify absence, misinterpretation, and mispositioning earlier, and helps them decide which problem scenarios deserve priority. A mention-monitoring tool is often good at showing what happened, but not necessarily at showing what should be fixed first.