How Fast Is AI Evolving?

AI has not just improved. It has changed shape.

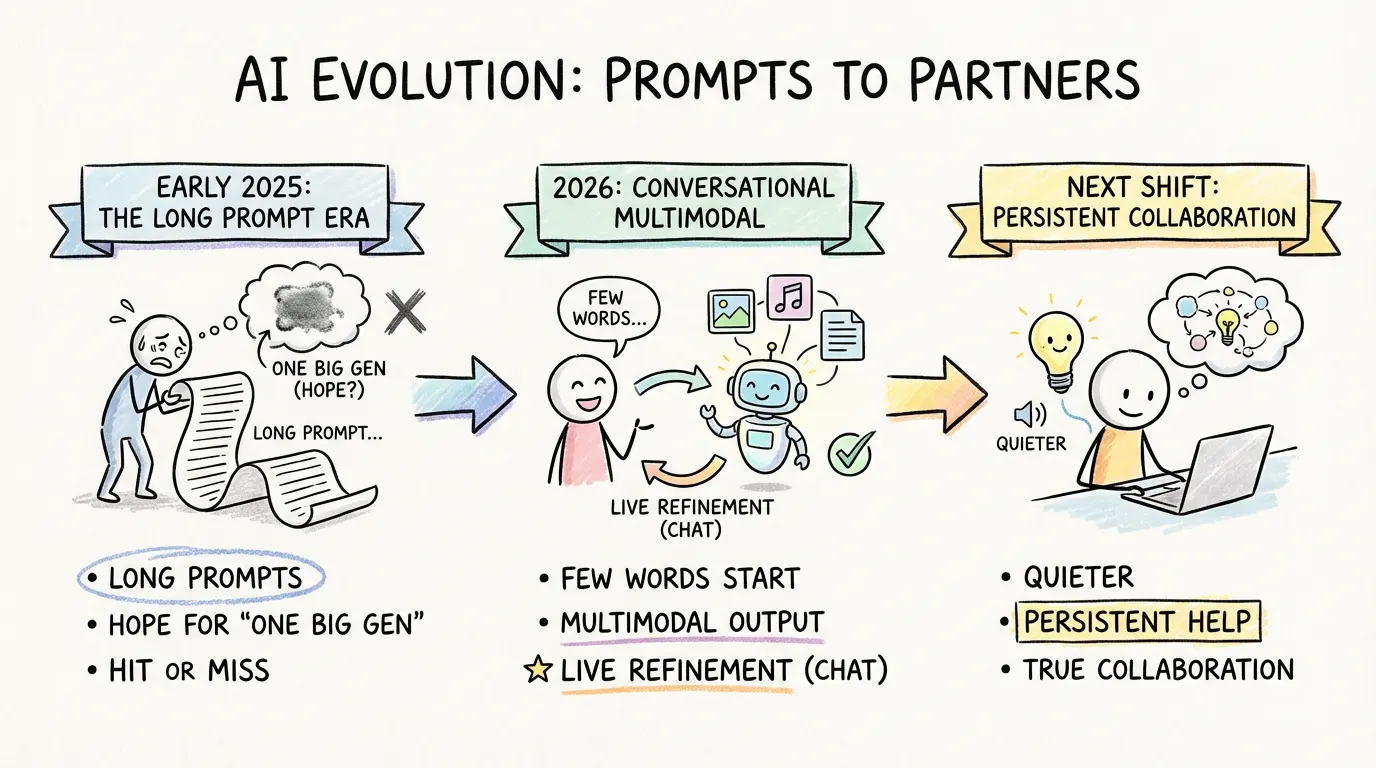

That distinction matters. In early 2025, a lot of serious users still treated AI as a system you had to manage through prompt architecture. If you wanted something close to your real goal, you often wrote a long brief, front-loaded every instruction, and tried to make the first output do as much work as possible. The prompt itself was half the product.

By 2026, that already feels dated.

Now the center of gravity has shifted toward short instructions, multimodal output, and live revision. You do not always need to explain the whole assignment up front. You can begin with a sketch, a sentence, an image, or even a vague intent, then shape the result through follow-up turns. In practice, the interaction feels less like issuing a command and more like steering a moving system.

That is the real story. AI is evolving so fast that the interface is starting to disappear.

In Early 2025, We Were Still Programming the Output

The early phase of the current AI wave rewarded people who could think like prompt engineers. If you understood model behavior, you could get much better results than someone who just typed a casual request.

That gap was real. A strong user knew how to define tone, format, constraints, exclusions, examples, fallback rules, and output structure before the model wrote a single line. A weak user wrote one sentence, got generic output, and concluded the system was overrated.

So the workflow became obvious: if you wanted quality, you had to compress your intent into the opening instruction.

That is why long prompts exploded. People were not being verbose for fun. They were compensating for the limits of the interaction model. The system had weak memory, limited persistence, inconsistent control, and a strong tendency to round your request into something average. If you did not specify the shape of the answer in advance, you often paid for it later.

For writers, marketers, designers, and operators, this created a peculiar habit. You spent more time preparing the ask than reacting to the result. The prompt became a defensive wall against drift.

By 2026, The Prompt Has Started Losing Its Monopoly

The change is not that prompting stopped mattering. It is that prompting stopped being the only serious control surface.

By 2026, many users have already internalized a different pattern. Start smaller. Get a usable first pass. Correct it in motion. Add references. Swap modality. Tighten the angle. Ask for three variations. Remove one section. Change the visual direction. Keep the structure, but make it sound less polished. Turn this into slides. Now rewrite the opening. Now make the image less literal.

That workflow would have felt clumsy or unreliable not long ago. Today it feels normal.

The biggest improvement is not just model quality. It is interaction elasticity. The system can carry context better, recover from ambiguity faster, and move across text, image, layout, voice, and editing with less ceremony. The user no longer has to lock the whole request on turn one.

That lowers the cost of experimentation. It also lowers the emotional cost of getting started.

This may sound like a minor UX upgrade. It is not. It changes who can use AI well.

When the only path to quality runs through a long, carefully designed prompt, the system rewards specialists and power users. When the system becomes more conversational, more multimodal, and more revisable, the skill floor drops. More people can get something useful without first learning an entire prompting ideology.

The Real Leap Is Friction Collapse

People often describe AI progress as if the model simply got smarter. Sometimes that is true, but it misses the operational shift.

The larger change is friction collapse.

In early 2025, the common pattern was: specify everything, generate once, inspect the output, realize it missed a hidden requirement, then either rewrite the prompt or manually repair the result. The loop worked, but it was expensive. The system saved labor inside the task while still demanding a lot of orchestration around the task.

By 2026, the loop is shorter and softer. You can begin with less. The model can infer more. You can correct in place instead of rebuilding from scratch. A text task can become a visual task without changing tools. A rough answer can become a working asset through dialogue. The cost of refinement falls, so the system gets used for more things.

That is one reason the discussion about making AI-written content sound more human has changed as well. The problem is no longer just how the first draft sounds. It is whether the user can keep shaping the draft until it starts to carry real intention.

This is also why so many people underestimate the speed of AI change. They compare outputs, but they do not compare interaction loops. The output of version B may look only somewhat better than the output of version A. Yet if version B needs half the setup, allows live correction, and moves across modalities, the practical difference is much larger than the surface sample suggests.

What This Changes for Real Work

The consequences are bigger than content generation.

For solo users, the gain is obvious: less setup, faster iteration, and lower activation energy. You can think with the system instead of briefing it like a contractor every single time.

For teams, the shift is more structural. It changes where work happens.

The older workflow pushed effort toward the front. Someone had to build the mega-prompt, define the output format, guess the edge cases, and try to get one clean shot. The newer workflow pushes effort toward steering and review. You can start from a lighter instruction, see where the model goes, and redirect without paying the full setup cost again.

That sounds small until you watch it happen across a week of real work. Research gets faster because the first pass appears earlier. Drafting gets faster because revision becomes interactive. Creative work gets looser because image, copy, and direction can move together. Decision-making gets faster because options can be generated, compared, and narrowed in one thread instead of across separate tools.

This is the same larger pattern that sits behind the rise of AI in the real world. Once AI stops feeling like a fragile demo and starts behaving like a revisable working surface, it gets pulled deeper into everyday operations.

That is when adoption stops being a novelty curve and starts becoming a workflow curve.

The Next Step Will Probably Be Less Visible

So what comes after short prompts, multimodal output, and conversational revision?

Probably something quieter.

The next stage is likely not a dramatic jump from "chat" to some entirely alien interface. It is more likely a steady move from prompt-first interaction to goal-first systems.

In a prompt-first world, the user still carries most of the burden of decomposition. Even if the opening instruction is short, the user is still telling the model what to do at each step.

In a goal-first world, the user states the outcome and the system handles more of the hidden scaffolding. It gathers context, proposes a sequence, chooses a mode, asks for confirmation only when it matters, and keeps working memory alive across sessions. Instead of saying, "Write this, now summarize it, now make a visual, now clean the tone, now adapt it for email," the user says, "Turn this idea into a launch package," and the system handles more of the assembly.

That does not mean humans disappear from the loop. It means the loop becomes more supervisory and less procedural.

The most interesting future feature may not be better generation quality at all. It may be better continuity. Systems that remember your standards. Systems that understand recurring goals. Systems that know what kind of first draft you usually reject. Systems that notice when the real bottleneck is not wording but sequencing, approval, or comparison.

If that happens, prompt skill will matter less as a visible craft and more as an absorbed layer inside the product.

The Strange Part Is How Fast We Normalize It

Maybe the fastest part of AI evolution is not model improvement. Maybe it is human adaptation.

Each stage feels remarkable for a few months, then ordinary. Long prompts once felt advanced. Then they felt annoying. Multimodal generation once felt like a showcase feature. Now many users treat it as baseline product behavior. Live conversational editing once felt like a clever interface trick. Now it increasingly feels like the obvious way these systems should work.

That is why forecasting AI is so awkward. The near future arrives in public, but its meaning arrives late.

We tend to notice the technical jump first and the behavioral jump afterward. Only later do we realize that the real change was not "the model can now do X." The real change was "people no longer organize their work the old way because X became cheap."

That is the level where the next wave will matter too.

Final Takeaway

AI is evolving fast enough that the unit of progress is no longer just output quality. It is the amount of human setup the system removes before useful work begins.

In early 2025, many users still had to write long prompts because they were trying to lock the result before the model drifted. By 2026, a lot of value already comes from doing the opposite: starting light, moving across modalities, and correcting the result in real time. The interaction has become more fluid, more collaborative, and less ceremonial.

The next step will likely push that further. Fewer explicit prompts. More persistent context. More hidden orchestration. More systems that feel less like answer engines and more like working partners.

If that happens, the biggest AI skill will no longer be writing the perfect prompt.

It will be knowing what outcome is worth steering toward in the first place.